I think a lot about assertions, things that people assert as true, very often without acknowledging their personal bias. To be fair, most of us are so immersed in our ideologies that we’re not aware of how they are compelling us toward bias.

The title of this post refers to some of the kinds of assertions I hear, by which someone states something as a fact:

- The way things are — some assertion about fact, whether it has to do with science, economics, politics, or some other sphere. One of my favorite manifestations is when someone begins an utterance with the stark word “Fact,” followed by a colon to emphasize the factiness of what follows, then followed by an unquestioned assertion.

- The way things were — some statement about history or the past. For example, such and such Egyptian dynasty ruled in such and such time period, or some assertion about why humans came down from the trees to live on the savanna.

- What is true — this is akin to the other two kinds of assertions, but here I’m thinking of an assertion that goes beyond a mere statement of fact. Some examples might be that God exists or that he doesn’t, or that evolution is an incontrovertible fact.

An assertion might be well supported, but what I’m trying to spotlight here is the common practice of making an assertion without acknowledging the background and context surrounding the assertion and the person making it. One result is that people get into fierce arguments even though they aren’t really arguing about the same thing.

Here are some of the kinds of influences that one might make clear to provide context to an assertion:

- The lines of evidence behind the assertion — Is the assertion based on scientific or scholarly research? Sometimes a speaker will make an assertion, basing his or her statement on the consensus within a profession or academic field. (Academic or scientific consensus doesn’t always mean the same thing as the everyday understanding of what constitutes a consensus.) One of the problems here is that there may actually be a minority that disputes the consensus view. There might be a legitimate critique that isn’t getting acknowledged when the speaker makes the assertion.

- Assumptions — Many assertions are based in part on ideas or constructs that are taken for granted. As with lines of evidence, there might be a legitimate minority critique of a given assumption. One example would be dating a past event based on the conventional chronologies hypothesized by historians and archaeologists.

- Definition of terms — Often people get into arguments without first agreeing on the meaning of the terms they are using. For example, people argue about whether evolution is true without coming to a prior understanding of what they mean by evolution.

- The ideological leanings of the speaker — For someone who wants to evaluate an assertion, it could be useful to know something about the speaker’s ideological convictions. Is the speaker a theist? An atheist? A free-market fundamentalist? An eco-socialist? One problem here is that many people either don’t like to admit that they subscribe to an ideology or aren’t even aware that they do.

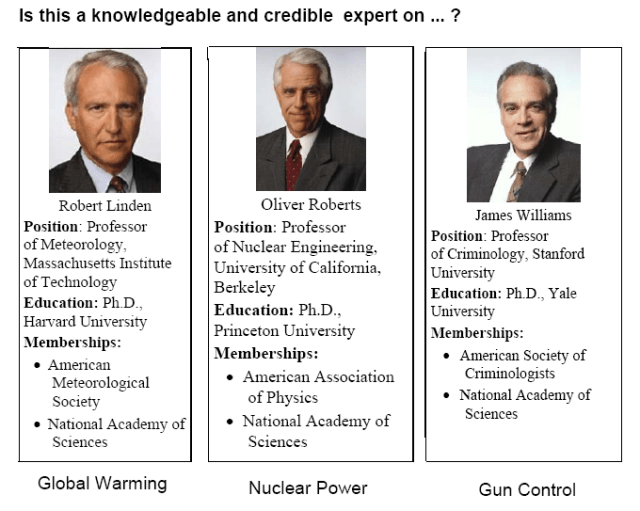

- The speaker’s authority for making the assertion — When evaluating an assertion, it can be useful to know the speaker’s credentials.

- The speaker’s underlying agenda — As with ideology, many speakers don’t like to own up to their agendas, which are often politically or ideologically driven.

As is often the case with this writing project, my purpose here is to set out some basic ideas with the intention of coming back later to revise and add ideas and examples.

ARB — 3 Oct. 2013